Introducing KnitKnot

Kevin Kho · Co-founder, KnitKnot


A few months ago I realized I’d stopped comparing tools on Google. Every time I needed something new (auth, a vector DB, transactional email), I just asked Claude what to use. Then I signed up for whatever it told me. No demo, no sales call, no shortlist of three vendors to evaluate.

I started asking around. Most of the engineers I talked to were doing the same thing. So were a lot of the founders. The buying conversation that used to happen on a sales call now happens in a single prompt, before the vendor knows it’s happening at all.

That’s what KnitKnot is for.

How we got here

We started KnitKnot by building a digital sales room. Same lane as Aligned, Dock, and Seismic. Better deal collateral, better mutual action plans, better buyer experience. It was a real problem, but every conversation kept landing in the same place: nice to have, not urgent.

The interesting thread was a smaller one. A handful of companies selling to engineers told us their buyers hated being on sales calls. The reps hated it too. The question that came out of those conversations was whether we could facilitate a rep-less buying experience. Could a champion come in, figure out what they needed, pitch it internally, and close, without anyone on the vendor side getting involved?

We put it on the roadmap as a feature. It didn’t feel like the company.

Then I noticed I was already buying tools that way. Almost everyone I asked was. We weren’t building for a hypothetical future. We were building for what we were already doing ourselves.

So we made it the whole product.

The question that locked us in

Once you accept that buyers are asking AI before they’re asking you, a different question shows up. I think most founders haven’t sat with it yet.

If an agent landed on your website today, what would you want it to see in order to buy?

Not a human visitor. An agent with thirty seconds and a directive to compare you against two competitors. What’s on your pricing page that helps it decide? What’s in your docs? What does the comparison article ranking second for your category say about you?

The fully agentic version of this isn’t speculative. Every piece already works somewhere. The distance between “Claude tells me which auth provider to use” and “Claude signs me up for it” is shorter than it looks. I’m honestly not sure about the timeline: twelve months feels aggressive, and thirty-six months feels conservative. But the question is the same either way. For a growing share of B2B purchases, the buyer isn’t a human, and most companies are still writing for the version of the buyer they’re used to.

A channel you can’t see

I think this is what makes AI different from any acquisition channel B2B has had before.

Every previous channel left a trail. SEO has Search Console. Paid has the ads dashboard. Outbound has the CRM. Events have badge scans. Even word of mouth shows up in “how did you hear about us.” If you cared to look, you could see what was happening.

AI doesn’t work that way. The conversation happens off your property, leaves you no logs, and sends the buyer to you with a position already formed. By the time they show up on your site, the model has told them who you are, who you aren’t, and who they should compare you to. The first impression has been made without you.

What KnitKnot does

That led us to the MVP of KnitKnot. Three parts:

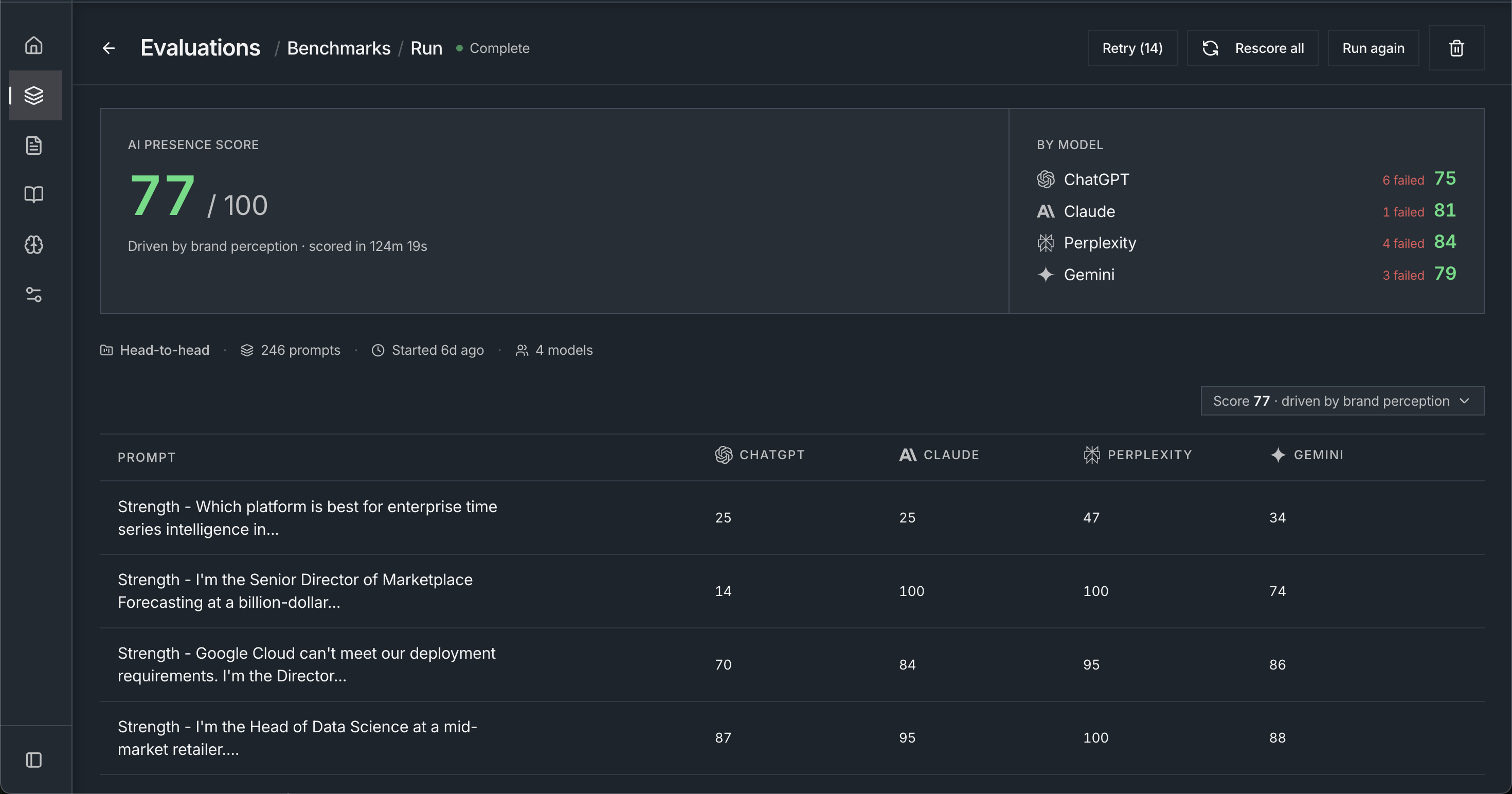

1. Benchmarks. We generate buyer-style prompts that pit you against the competitors your reps hear in discovery, and run them across ChatGPT, Claude, Perplexity, and Gemini. A structured judge scores each response on factual accuracy, feature attribution, competitive framing, and citation quality. You get a single AI Presence Score and a per-engine breakdown.

2. Reports. The score is the headline. The gap report is the product. For every losing evaluation, we surface the factual error, the missing feature, or the misattributed strength that drove the loss, along with which third-party source the model cited to justify it. Every line links back to the raw model response. You can read what was said and why.

3. Playbooks. Every benchmark ends with a ranked list of content tactics: pages to write, features to surface, sources to influence. Each tactic is scored by how many of your losing evaluations it would flip if the content landed in the model’s next training cycle. The next benchmark shows you whether it worked.
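
To make the three parts concrete, here is a minimal sketch of how judge scores could roll up into a single score with a per-engine breakdown, and how tactics could be ranked by the losing evaluations they might flip. It is an illustration under stated assumptions, not how KnitKnot is actually implemented: the 0-to-1 scale per dimension, the equal weighting, the 0-100 output, the `won` flag, and every name in the code (`Evaluation`, `presence_report`, `rank_tactics`) are hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import mean

# The four judge dimensions named in the post.
# Assumption: each one is scored on a 0-to-1 scale.
DIMENSIONS = (
    "factual_accuracy",
    "feature_attribution",
    "competitive_framing",
    "citation_quality",
)


@dataclass(frozen=True)
class Evaluation:
    """One judged model response to one buyer-style prompt (hypothetical shape)."""
    eval_id: str
    engine: str              # e.g. "chatgpt", "claude", "perplexity", "gemini"
    scores: dict[str, float]  # dimension name -> score in [0, 1]
    won: bool                # assumption: the judge also records win or loss


def evaluation_score(ev: Evaluation) -> float:
    # Assumption: all four dimensions are weighted equally.
    return mean(ev.scores[d] for d in DIMENSIONS)


def presence_report(evals: list[Evaluation]) -> tuple[float, dict[str, float]]:
    """Return (overall score, per-engine scores), both on a 0-100 scale."""
    by_engine: dict[str, list[float]] = defaultdict(list)
    for ev in evals:
        by_engine[ev.engine].append(evaluation_score(ev))
    per_engine = {e: round(100 * mean(v), 1) for e, v in by_engine.items()}
    overall = round(100 * mean(evaluation_score(ev) for ev in evals), 1)
    return overall, per_engine


def rank_tactics(
    tactics: dict[str, list[str]], evals: list[Evaluation]
) -> list[tuple[str, int]]:
    """Rank tactics by how many losing evaluations each is expected to flip.

    `tactics` maps a tactic name to the eval_ids it targets (hypothetical input).
    """
    losing = {ev.eval_id for ev in evals if not ev.won}
    flips = {name: len(set(ids) & losing) for name, ids in tactics.items()}
    return sorted(flips.items(), key=lambda item: item[1], reverse=True)


def uniform(value: float) -> dict[str, float]:
    # Helper for the example data only: same score on every dimension.
    return {d: value for d in DIMENSIONS}


if __name__ == "__main__":
    evals = [
        Evaluation("e1", "chatgpt", uniform(0.5), won=False),
        Evaluation("e2", "claude", uniform(1.0), won=True),
        Evaluation("e3", "chatgpt", uniform(0.0), won=False),
    ]
    overall, per_engine = presence_report(evals)
    print(overall, per_engine)

    tactics = {
        "Write an SSO docs page": ["e1", "e3"],
        "Clarify the pricing page": ["e3"],
        "Pitch a review site": ["e2"],
    }
    print(rank_tactics(tactics, evals))
```

A real judge would likely weight the dimensions differently and estimate flips probabilistically; the point of the sketch is only the shape of the data: one scored evaluation per prompt and engine, rolled up for the headline and joined against tactics for the playbook.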

Who this is for

KnitKnot is for you if:

- Your product gets compared to two or three other vendors on sales calls, and you can name them.

- You’ve noticed buyers showing up to demos already convinced of a position you didn’t put in front of them.

- You’re responsible for how your company is positioned in the market, and you’ve started wondering what AI is saying when you’re not in the room.

We’re working closely with a small group of design partners right now to sharpen the product before general availability. If any of the above sounds like you, get in touch. We’ll run a benchmark, walk you through the report, and figure this out with you.